A History of Unseen Killers: Drones, AI Integration and Policy

Laughing through clouds, his milk-teeth still unshed,

Cities and men he smote from overhead.

His deaths delivered, he returned to play

Childlike, with childish things now put away.

— Rudyard Kipling, R.A.F. (Aged Eighteen)

Death began as a human affair. For most of history, organised political violence was waged by humans against other humans. Yet from hoplite phalanxes to strategic bombers, military thinkers have sought to extend force projection beyond the limits of the individual soldier. Modern warfare has steadily redistributed agency to systems of sensors, datalinks, algorithms, analysts, and distant decision-makers, turning killing into an administrative outcome. Technology promises politically rewarding control and distance; civilians bear a disproportionate share of the resulting risk.

This essay examines the history of drone warfare and its technological evolution, especially with regard to AI and automation. It then turns to two case studies: U.S. post-9/11 targeted killing campaigns and drone use in Ukraine. Throughout, it considers the impact of remote war on power: the power to kill, to observe, to classify human life, and to dissolve accountability.

The history of drone warfare reveals a specific formulation of political power: the capacity to project lethal force, especially at a distance, while dispersing responsibility for the resulting deaths across technical systems and institutions. Read in this way, drone warfare reconfigures sovereign power by separating political liability from operational advantage, intensifying modes of control which obscure agency and transform human lives into data.

1) What is a Drone Strike?

A modern militarised drone is best understood as a killing system, where the aircraft is only one component of an increasingly complicated and technical stack:

● Sensing layer: optical and infrared cameras along with radar and other modalities, each contributing full-motion video and relevant metadata

● Positioning and navigation: Global Navigation Satellite System (GNSS) when available, otherwise inertial navigation and, more recently, sensor-fusion approaches (visual-inertial odometry, LiDAR-visual fusion, cooperative navigation) that sustain operation in GPS-denied environments

● Communications: line-of-sight links, relays, SATCOM; ad-hoc and multi-drone architectures

● Command and Control (C2): mission software and ground control stations which integrate ISR feeds into strike authority constrained by the rules of engagement

● Decision layer: humans and machines classifying targets, tracking them, planning routes, and scoring threats

● Effects layer: the munitions delivery system (missiles, loitering munitions, FPV drones)

Importantly, moral hazard is endemic to autonomous warfare because of two engineering goals: reducing the bandwidth used by operators and reducing time-to-strike. While such goals may be militarily sensible, they also compress decision time and reduce civilian safety. This compression of decision-making highlights power as a form of acceleration: an actor able to act faster than ethical, legal, or political scrutiny can respond gains a strategic advantage over an opponent.

This is expressed plainly in reports by the Defense Advanced Research Projects Agency (DARPA). The DARPA program “CODE” seeks to create algorithms that allow unmanned aircraft to operate in denied or contested environments while reducing both the communications they require and the cognitive burden on human supervisors. Similarly, the program “OFFSET” envisions swarms of up to 250 small unmanned (aerial) systems, achieved through autonomous human-drone control dyads.

2) Technical Arc

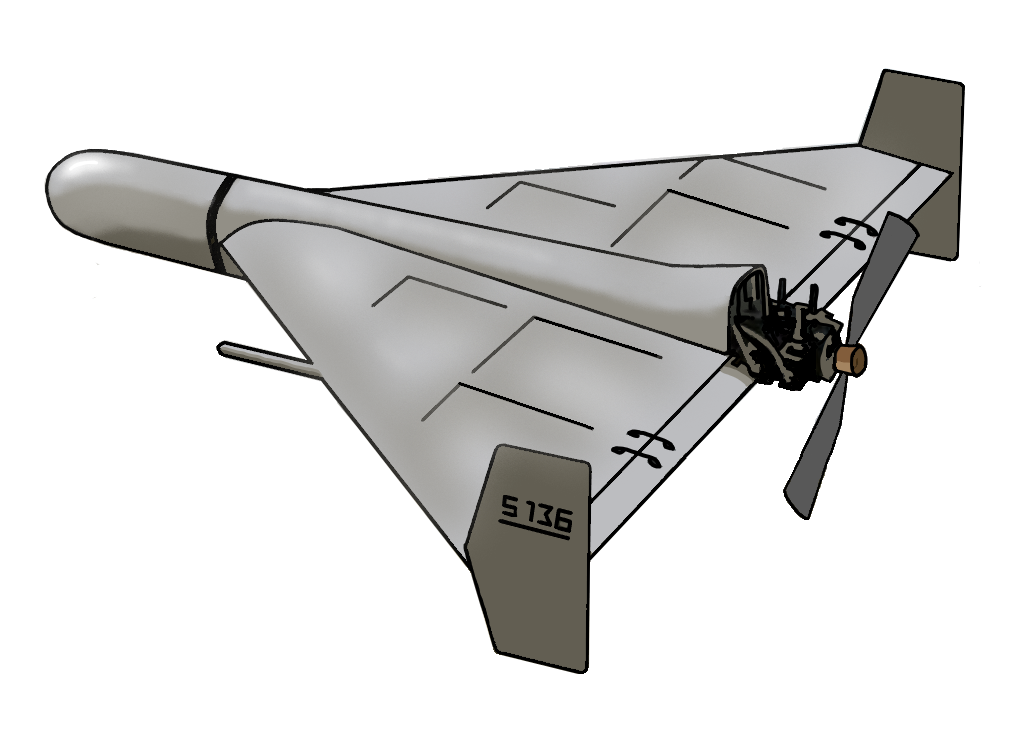

The earliest concepts of drones were not intelligent. They focused instead on unmanned munitions: the delivery of violence without a human physically present. Austria’s use of balloon bombs against Venice in 1849 is often cited as the earliest remote aerial attack. Later, early twentieth-century systems like the US Kettering “Bug” attempted to mechanise flight paths and impact without the presence of a pilot. In the lead-up to WWII, radio-controlled aircraft like the DH.82B Queen Bee established unmanned flight and popularised the ‘drone’ label. These developments parallel earlier changes in the delegation of violence, such as innovations in artillery and strategic bombing. In each case, however, while distance and abstraction diluted immediate responsibility, agency was not removed. Drones therefore fit into a long historical tradition of distancing the wielders of force from its physical consequences, but they accelerate and institutionalise that distance in unprecedented ways.

During the Cold War, drones became a central part of reconnaissance, as risks could be outsourced to machines. The first large-scale use of reconnaissance drones was in Vietnam, with rapid expansion thereafter. The Israeli use of UAVs during the 1982 invasion of Lebanon demonstrated that unmanned platforms could support warfare, as the IAI Scout and Tadiran Mastiff systems were used to identify Syrian radar frequencies, or as decoys to waste surface-to-air missile (SAM) stocks. Post-9/11, technological innovations moved beyond merely arming drones to closing the loop: combining persistent surveillance with rapid strike capability and continuous assessment. Drones thus became part of durable overhead control.

The last few years have brought another inflection point in the use of drones, marked by scale and growing autonomy. In Ukraine, drones are produced and expended much as ammunition is. Further, AI is increasingly integrated (often quietly and incrementally) to keep drones effective despite jamming, to coordinate swarms, and to accelerate the targeting workflow. The result of this innovation is not just a change in technology but a reconfiguration of coercive capabilities. Drone warfare is no longer the unique preserve of the world’s greatest military superpowers: because drones are increasingly easy and cheap to manufacture, more parties are able to deploy the technology. Those who can effectively command and control such systems have the capacity to saturate airspace, to maintain territory, and to impose continuous exposure to aerial risk on forces below. As such, the technical development of drone warfare has rebalanced geopolitics, placing more power in the hands of smaller states and non-state actors, particularly in asymmetric or guerrilla conflicts.

3) AI and Automation

The integration of AI into drone warfare consists not of one feature but a bundling of capabilities. Each additional capability shapes the power of the drone: each upgrade improves the ability to decide, to see, and to strike, allowing operators to apply lethal force against adversaries below.

a. Perception and targeting assistance

Computer vision models (AI algorithms, specifically deep neural networks, trained to interpret and analyse visual data) support object detection and classification (vehicles, artillery, and personnel clusters), tracking across frames to reduce analyst workloads, and the understanding of scenes for cueing and prioritisation work. Here, power increasingly operates through probabilistic classification: those who have access to the largest sets of data, or the most advanced methods of interpreting it, can most effectively utilise their drones in a combat situation.

b. Navigation and electronic warfare

One of the largest drivers of innovation in autonomy is GNSS interference (jamming and spoofing attacks). Unclassified surveys of UAV navigation in GPS-denied environments describe methods such as visual navigation, mapping, learning-based approaches, and sensor fusion. Technical literature on GPS-resilient methods identifies visual-inertial and LiDAR-visual-inertial fusion as the main methods of maintaining continuous state estimation when GNSS is unavailable.

Ethically, this raises serious concerns. Weapons are designed to keep finding their way towards a target even when humans lose communication with the delivery platform or lose situational clarity. Putting that to one side, however, this is another demonstration of how technological development allows actors to exert material power. Those who can afford such developments and have the expertise to make them can keep killing even when an opponent has disrupted communications and signals.

c. Multi-drone coordination and swarming

Swarming is best understood as the coordination of many drones under constraints. It has three key components: swarming architectures, swarming algorithms, and human-swarm teaming. Architectures include infrastructure-based control and ad-hoc network swarms. Algorithms can prioritise leader-follower hierarchy, consensus behaviour, or decentralised task assignment; many of these are studied through multi-agent learning. Lastly, human-swarm teaming is the operation of increasing numbers of drones through higher-level commands.

Recent work describes the use of multi-agent reinforcement learning (MARL) for swarm coordination and control. While cutting-edge MARL is not deployed everywhere today, a problem remains: research and procurement logic are both converging on scaling lethal capacity while diminishing human oversight. Swarming thus concentrates the power of the drone in an increasingly small pool of operators. A decision that might once have involved ten people may now involve half that number or fewer, so that authority over decision-making becomes organisationally compressed.

d. The autonomy boundary

The International Committee of the Red Cross (ICRC) defines autonomous weapons systems as ones that can select targets and apply (often lethal) force to them without human intervention after activation. It warns that users may not choose or even know the specific targets or the timing of an attack once the system begins operating from sensor inputs and generalised targeting profiles. There is no inherent issue with drones using AI somewhere; there is an inherent problem with a lack of human control over individual kill decisions. The power to decide whether someone lives or dies is no longer clearly attributable to an individual or an office; it has been diluted among a network of software designers, commanders, and the machines themselves. The complexity of drone warfare means that, while an increasingly small group of people is responsible for managing it, identifying a single individual responsible for a death is often difficult. This diffusion complicates responsibility doctrines under international humanitarian law. Power has become more concentrated, but simultaneously less accountable. The US use of drones in the 21st century is a case in point.

4) U.S. Drone Operations (2001–Present)

After 9/11, U.S. usage of drones became a semi-permanent infrastructure of surveillance and ‘targeted killing.’ A Congressional Research Service (CRS) brief notes that between 2010 and 2020, the US carried out over 14,000 drone strikes across Afghanistan, Pakistan, Yemen, and Somalia. There were two main technical features present among these drone strikes: persistence and remote lethality. Persistence means long loiter times, enabling pattern-of-life analysis (including ‘signature strikes’) that turns daily life into data for interpretation, sometimes pre-empting a strike. Remote lethality is strike authority, exercised far away from the battlefield (across borders) and sometimes through covert channels. Drones made the projection of force without corresponding domestic political risk much easier. This entrenched an asymmetric power system, lowering domestic political costs while keeping target populations under continuous surveillance and risk of death. Meanwhile, those authorising strikes remain insulated, physically and politically, from harm.

The American expansion of drone usage has been criticised by numerous international human rights organisations. Drone usage suffers from a continued (often intentional) transparency deficit; independent organisations have built their own casualty tracking because governments do not provide reliable and comprehensive accounting. Casualties are often understated, and counting methods are constrained by limited access and inconsistent reporting of missions and kills. Harm to civilians can recur in a system that prioritises speed and inference. At the legal level, UN reporting has directly addressed targeted killings by armed drones. The structural ambiguity built into systems that distribute decisions (across operators, analysts, lawyers, agencies, and software) acts as a form of protection for force-wielding institutions. The architecture of US drone operations is a striking contemporary example of how power can be lethally exercised across borders without corresponding accountability.

5) Ukraine (2022–Present)

Ukraine has quickly become an effective if tragic demonstration of mass drone warfare. FPV drones, reconnaissance quadcopters, long-range strike UAVs, and maritime drones all operate in a dense environment rife with electronic warfare.

Ukraine’s Ministry of Defence has stated that the country’s defence-industrial capacity in 2025 is approximately 4.5 million FPV drones, and that it plans to procure all of them. The tactical result of such staggering scale is a battlefield where drones serve at once as expendable precision munitions, as intelligence, surveillance, and reconnaissance (ISR) nodes that feed artillery, and as instruments for expanding lethal reach into spaces which were previously relatively safe. This industrialisation of cheap and networked drones redistributes power on the battlefield to smaller and lower-funded units: such formations are able to exercise forms of violence once reserved for well-funded militaries.

Drone swarms are coordinated in Ukraine with regularity. In June 2025, Ukraine conducted Operation “Spider’s Web”, which relied on small drones launched from deep within Russia. The result was an effective demonstration of how massed low-cost systems and operational ingenuity can bypass traditional air-defence assumptions and systems. And even when coordination isn’t fully autonomous, the operational effect is still swarm-like; attacks are distributed, there are decoys, and adoption is rapid and iterative. Power is both tactical and symbolic: drones both stretch and confuse enemy defences and show the enemy as vulnerable at a distance.

The most valuable AI applications in Ukraine are often unglamorous, sitting in places like navigation aids, tracking assistance, and jamming resilience. Technical literature on autonomy in GPS-denied environments emphasises that drone survivability and effectiveness increasingly rely on sensor fusion and learned perception. These incremental technical optimisations gain power from two trends: data-driven violence becomes more reliable, and it depends less on the limits of human stamina or perception.

Civilian harm is manifest in these applications. Drones may be precision weapons, but short-range drones can be precise instruments of civilian death. The precision of a drone to detonate at a specific place and point in time does not guarantee that the persons finding themselves at that place and point are legitimate targets. The UN Human Rights Monitoring Mission in Ukraine reported in 2025 that short-range drones, including FPV-style ones, caused the highest number of civilian casualties during several months. The Kherson region has been particularly affected. When systems are cheap, numerous, and fast, civilian protection systems can degrade under conditions of rapid scale. Power, as experienced by civilians, is not abstract doctrine. Rather, it is a permanent risk – the lived reality that any visible movement or emission of signal may attract death from above.

6) Policy and Research Priorities

Across both case studies, three patterns emerge: a reduction of friction through automation, an increase in throughput, and a magnification in the scale of error. The warning by the ICRC is not abstract; autonomous weapons platforms can initiate strikes based on sensor data and general target profiles, causing civilian death. Remote war has a political economy which incentivises efficient killing through AI-enabled autonomous weapons platforms, and which decreases the capacity of those subjected to such force to resist it, contest it, or even understand it.

Corresponding to these three patterns are three important areas of policy. The first is operationalised and meaningful human control: not just a human in the loop, but an operator with time, information, and the authority to refuse a strike, especially when AI tools are involved. Second, auditability and disclosure must be included as protection mechanisms for civilians. Black-box targeting, in which opaque AI or bureaucracy is involved in kill decisions, invites impunity. Lastly, technical innovation should prioritise restraint and reliability in situations of uncertainty. As work on GPS-denied navigation, sensor fusion, and swarm control accelerates, these capabilities must be paired with conservative engagement rules, robust standards of identification, and post-strike investigative requirements to preserve the principles of proportionality and distinction. Choosing to fund and reward slower, more cautious capabilities is a political decision in and of itself, a decision about what forms of power are acceptable. We should choose to limit and account for the harm we cause, rather than prioritise the ability to kill quickly at scale.

The history of drone warfare shows that modern states seek power that is distant, deniable, and rises above the stamina and perception of human operators. At the same time, it shows how states seek to decouple themselves from responsibility for the exercise of this power through increasing layers of complexity, bureaucracy, and technical dilution or abstraction. Any serious policy response must therefore go beyond technical regulation to contesting who can watch, classify, and kill from above, and under what conditions it is normatively acceptable for them to do so.

Bibliography

Congressional Research Service. Armed Drones: Evolution as a Counterterrorism Tool. IF12342, 2023, www.congress.gov/crs-product/IF12342.

The Bureau of Investigative Journalism. Drone Warfare. Drone War Project, www.thebureauinvestigates.com/projects/drone-war.

New America. America’s Counterterrorism Wars. New America, www.newamerica.org/future-security/reports/americas-counterterrorism-wars/.

Columbia Law School, Human Rights Institute. Counting Drone Strike Deaths. 2012, hri.law.columbia.edu/sites/default/files/publications/CountingDroneDeathsPresserFINAL.pdf.

Mwatana for Human Rights. Death by Drones: Civilian Harm Caused by U.S. Targeted Killings in Yemen. 2017, www.mwatana.org/reports-en/death-by-drones-7.

United Nations Office of the High Commissioner for Human Rights. Use of Armed Drones for Targeted Killings. Report of the Special Rapporteur on Extrajudicial, Summary or Arbitrary Executions, A/HRC/44/38, Human Rights Council, 2020, www.ohchr.org/en/documents/thematic-reports/ahrc4438-use-armed-drones-targeted-killings-report-special-rapporteur.

RAND Corporation. Clarifying the Rules for Targeted Killing: An Analytical Framework for U.S. Policy. RR-1610, RAND Corporation, 2016, www.rand.org/content/dam/rand/pubs/research_reports/RR1600/RR1610/RAND_RR1610.pdf.

International Committee of the Red Cross. ICRC Position on Autonomous Weapon Systems. ICRC, www.icrc.org/en/document/icrc-position-autonomous-weapon-systems.

International Committee of the Red Cross. Autonomous Weapons: Law and Policy Overview. ICRC, www.icrc.org/en/law-and-policy/autonomous-weapons.

Human Rights Watch. A Hazard to Human Rights: Autonomous Weapons Systems and Digital Decision-Making. 2025, www.hrw.org/report/2025/04/28/a-hazard-to-human-rights/autonomous-weapons-systems-and-digital-decision-making.

United Nations Human Rights Monitoring Mission in Ukraine. Protection of Civilians in Armed Conflict: January 2025. OHCHR, 2025, ukraine.ohchr.org/en/Protection-of-Civilians-in-Armed-Conflict-January-2025.

United Nations Office at Geneva. “Short-Range Drones: The Deadliest Threat to Civilians in Ukraine.” UN Geneva News, Feb. 2025, www.ungeneva.org/en/news-media/news/2025/02/103208/short-range-drones-deadliest-threat-civilians-ukraine.

Picheta, Rob, and Reuters. “Drones Become Most Common Cause of Death for Civilians in Ukraine War, UN Says.” Reuters, 11 Feb. 2025, www.reuters.com/world/europe/drones-become-most-common-cause-death-civilians-ukraine-war-un-says-2025-02-11/.

Reuters Graphics. “How Ukraine Pulled Off an Audacious Attack Deep Inside Russia.” Reuters, www.reuters.com/graphics/UKRAINE-CRISIS/DRONES-RUSSIA/mypmjzayyvr/.

Chatham House. “Ukraine’s Operation Spider’s Web Is a Game-Changer for Modern Drone Warfare.” June 2025, www.chathamhouse.org/2025/06/ukraines-operation-spiders-web-game-changer-modern-drone-warfare-nato-should-pay-attention.

Center for Strategic and International Studies. “How Ukraine’s ‘Spider Web’ Operation Redefines Asymmetric Warfare.” June 2025, www.csis.org/analysis/how-ukraines-spider-web-operation-redefines-asymmetric-warfare.

Ukraine Ministry of Defence. “In 2025, the Ministry of Defence Plans to Procure 4.5 Million FPV Drones.” 2025, mod.gov.ua/en/news/glib-kanievskyi-in-2025-the-ministry-of-defence-plans-to-procure-4-5-million-fpv-drones.

Al Jazeera. “Ukraine Announces Plan to Boost FPV Drone Arsenal.” Al Jazeera, 10 Mar. 2025, www.aljazeera.com/news/2025/3/10/ukraine-announces-plan-to-boost-fpv-drone-arsenal.

Defense Advanced Research Projects Agency. Collaborative Operations in Denied Environment (CODE). DARPA, www.darpa.mil/research/programs/collaborative-operations-in-denied-environment.

Defense Advanced Research Projects Agency. OFFensive Swarm-Enabled Tactics (OFFSET). DARPA, www.darpa.mil/research/programs/offensive-swarm-enabled-tactics.

Zhang, Y., et al. “A Review of UAV Autonomous Navigation in GPS-Denied Environments.” Robotics and Autonomous Systems, vol. 168, 2023, ScienceDirect, www.sciencedirect.com/science/article/pii/S0921889023001720.

Nguyen, H. T., et al. “A Survey on UAV Control with Multi-Agent Reinforcement Learning.” Drones, vol. 9, no. 7, 2025, MDPI, www.mdpi.com/2504-446X/9/7/484.

Liu, Y., et al. “Distributed Machine Learning for UAV Swarms: Computing, Sensing, and Communications.” arXiv, 2023, arxiv.org/pdf/2301.00912.

Wang, J., et al. “UAV Swarm Communication and Navigation Architectures.” Advances in Guidance, Navigation and Control, Springer, 2023, link.springer.com/chapter/10.1007/978-981-19-6613-2_232.

Shen, S., et al. “LiDAR–Visual–Inertial Sensor Fusion for UAV Localization.” Drones, vol. 8, no. 9, 2024, MDPI, www.mdpi.com/2504-446X/8/9/487.

History.com Editors. “Austria Attacks Venice with Balloon Bombs.” History.com, 22 Aug. 1849, www.history.com/this-day-in-history/august-22/first-remote-aerial-bombing-austria-balloons-1849.

National Museum of the United States Air Force. “Kettering Aerial Torpedo ‘Bug.’” National Museum of the USAF, www.nationalmuseum.af.mil/Visit/Museum-Exhibits/Fact-Sheets/Display/Article/198095/ketaerial-torpedo-bug/.

de Havilland Aircraft Museum. “de Havilland DH.82B Queen Bee.” de Havilland Aircraft Museum, www.dehavillandmuseum.co.uk/aircraft/de-havilland-dh82b-queen-bee/.

Neuman, Stephanie G. “Unmanned Aircraft in Israeli Air Operations.” Journal of Strategic Studies, JSTOR, www.jstor.org/stable/26271146.

Arkin, William M. “The Bekaa Valley War.” Air & Space Forces Magazine, June 2002, www.airandspaceforces.com/article/0602bekaa/.

Kipling, Rudyard. “R.A.F. (Aged Eighteen).” In Epitaphs of the War. https://www.kiplingsociety.co.uk/poem/poems_epitaphs.htm